Bidirectional Learning for Domain Adaptation of Semantic Segmentation

Lu Yuan2

UC San Diego1, Microsoft2

Overview

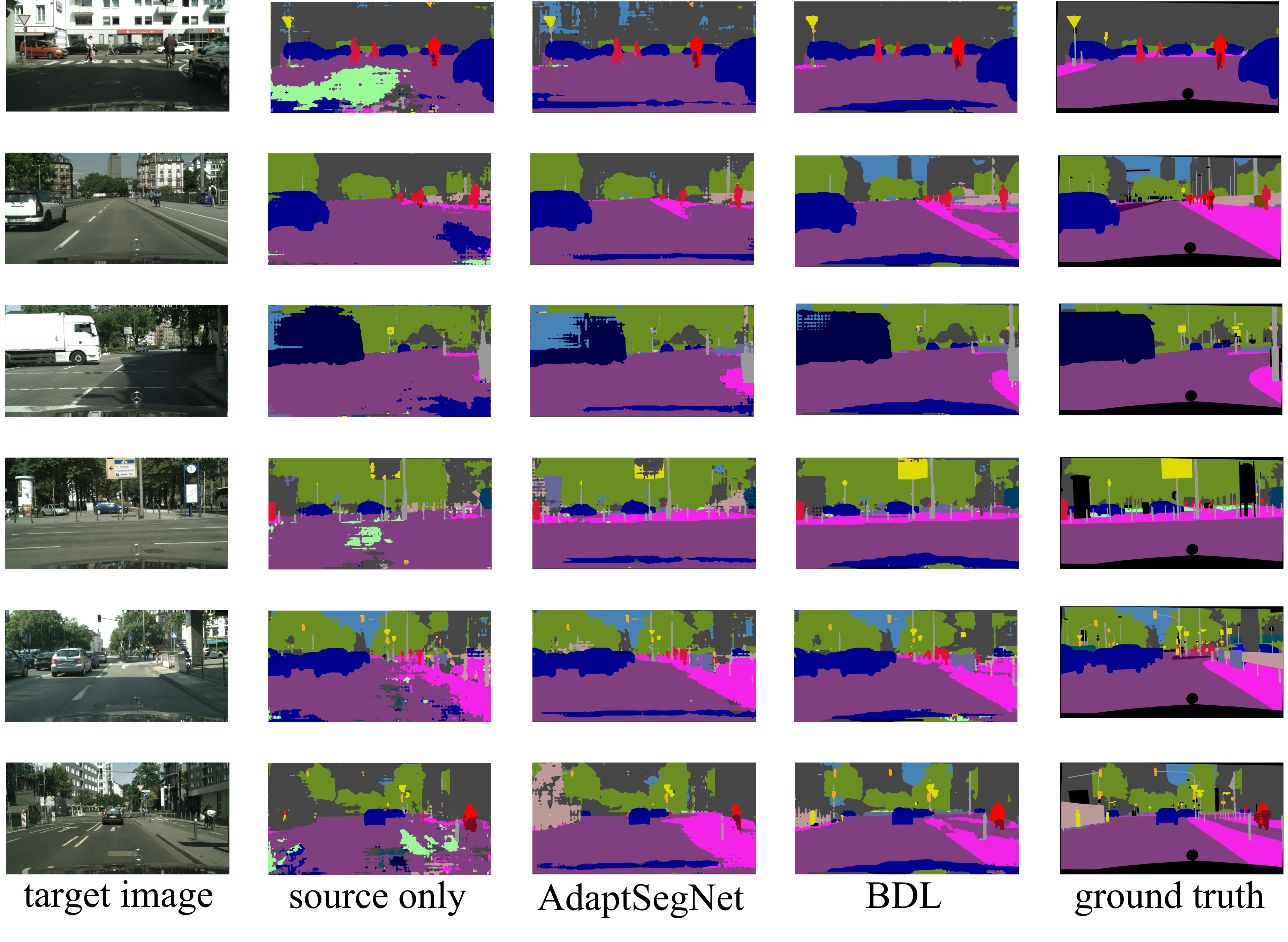

Domain adaptation for semantic image segmentation is needed because manually labeling large datasets with pixel-level labels is expensive and time-consuming. Existing domain adaptation techniques either work only on limited datasets or perform noticeably worse than supervised learning. In this project, we propose a novel bidirectional learning framework for domain adaptation of segmentation. With bidirectional learning, the image translation model and the segmentation adaptation model are learned alternately and promote each other. Furthermore, we propose a self-supervised learning algorithm to learn a better segmentation adaptation model, which in turn improves the image translation model. Experiments show that our method outperforms state-of-the-art methods for domain adaptation of segmentation by a large margin.

Highlights

Forward direction: the images are translated to the target domain and fed to the segmentation model. Backward direction: the segmentation model is kept fixed and serves as a perceptual loss for learning the image translation model.
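The two directions can be illustrated with a toy sketch. This is not the project's implementation: the image type, the one-parameter `translate` function, the sigmoid "segmentation model", and the mean-absolute-difference form of the loss are all hypothetical stand-ins, chosen only to show how a frozen segmentation model can score a translated image.

```python
import math
from typing import List

Image = List[float]  # toy "image": a flat list of pixel intensities (assumption)


def translate(image: Image, shift: float) -> Image:
    """Hypothetical translation model with one learnable parameter (a brightness shift)."""
    return [p + shift for p in image]


def segment(image: Image, weight: float = 4.0, bias: float = -2.0) -> List[float]:
    """Toy segmentation model: per-pixel foreground probability via a sigmoid.

    weight and bias are treated as frozen in the backward direction.
    """
    return [1.0 / (1.0 + math.exp(-(weight * p + bias))) for p in image]


def perceptual_loss(source: Image, translated: Image) -> float:
    """Mean absolute difference between the frozen model's outputs on the
    source image and on its translation (one assumed form of perceptual loss)."""
    out_src = segment(source)
    out_trn = segment(translated)
    return sum(abs(a - b) for a, b in zip(out_src, out_trn)) / len(out_src)


source_image = [0.1, 0.5, 0.9]

# Forward direction: translate the image, then feed it to the segmentation model.
translated_image = translate(source_image, shift=0.2)
prediction = segment(translated_image)

# Backward direction: the segmentation model stays fixed and only scores the
# translation; a real system would update the translation model from this value.
loss = perceptual_loss(source_image, translated_image)
```

In this sketch the loss is zero when the translation leaves the segmentation output unchanged and grows as the translation alters what the frozen model predicts, which is the signal the translation model would be trained against.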

Benefits

- The gap between the source and target domains can be reduced iteratively.

- The framework can be transferred from source to target as well as from target to source.